spgl1.spgl1

- spgl1.spgl1(A, b, tau=0, sigma=0, x0=None, fid=None, verbosity=0, iter_lim=None, n_prev_vals=3, bp_tol=1e-06, ls_tol=1e-06, opt_tol=0.0001, dec_tol=0.0001, step_min=1e-16, step_max=100000.0, active_set_niters=inf, subspace_min=False, iscomplex=False, max_matvec=inf, weights=None, project=<function _norm_l1_project>, primal_norm=<function _norm_l1_primal>, dual_norm=<function _norm_l1_dual>)[source]

SPGL1 solver.

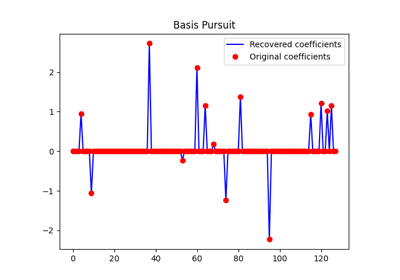

Solve basis pursuit (BP), basis pursuit denoise (BPDN), or LASSO problems [1] [2] depending on the choice of

tauandsigma:(BP) minimize ||x||_1 subj. to Ax = b (BPDN) minimize ||x||_1 subj. to ||Ax-b||_2 <= sigma (LASSO) minimize ||Ax-b||_2 subj, to ||x||_1 <= tau

The matrix

Amay be square or rectangular (over-determined or under-determined), and may have any rank.- Parameters

- A{sparse matrix, ndarray, LinearOperator}

Representation of an m-by-n matrix. It is required that the linear operator can produce

AxandA^T x.- barray_like, shape (m,)

Right-hand side vector

b.- taufloat, optional

LASSO threshold. If different from

None, spgl1 solves LASSO problem- sigmafloat, optional

BPDN threshold. If different from

None, spgl1 solves BPDN problem- x0array_like, shape (n,), optional

Initial guess of x, if None zeros are used.

- fidfile, optional

File ID to direct log output, if None print on screen.

- verbosityint, optional

0=quiet, 1=some output, 2=more output.

- iter_limint, optional

Max. number of iterations (default if

10*m).- n_prev_valsint, optional

Line-search history lenght.

- bp_tolfloat, optional

Tolerance for identifying a basis pursuit solution.

- ls_tolfloat, optional

Tolerance for least-squares solution. Iterations are stopped when the ratio between the dual norm of the gradient and the L2 norm of the residual becomes smaller or equal to

ls_tol.- opt_tolfloat, optional

Optimality tolerance. More specifically, when using basis pursuit denoise, the optimility condition is met when the absolute difference between the L2 norm of the residual and the

sigmais smaller thanopt_tol.- dec_tolfloat, optional

Required relative change in primal objective for Newton. Larger

decTolmeans more frequent Newton updates.- step_minfloat, optional

Minimum spectral step.

- step_maxfloat, optional

Maximum spectral step.

- active_set_nitersfloat, optional

Maximum number of iterations where no change in support is tolerated. Exit with EXIT_ACTIVE_SET if no change is observed for

activeSetItiterations- subspace_minbool, optional

Subspace minimization (

True) or not (False)- iscomplexbool, optional

Problem with complex variables (

True) or not (False)- max_matvecint, optional

Maximum matrix-vector multiplies allowed

- weights{float, ndarray}, optional

Weights

Win||Wx||_1- projectfunc, optional

Projection function

- primal_normfunc, optional

Primal norm evaluation fun

- dual_normfunc, optional

Dual norm eval function

- Returns

- xarray_like, shape (n,)

Inverted model

- rarray_like, shape (m,)

Final residual

- garray_like, shape (h,)

Final gradient

- infodict

Dictionary with the following information:

tau, final value of tau (see sigma above)rnorm, two-norm of the optimal residualrgap, relative duality gap (an optimality measure)gnorm, Lagrange multiplier of (LASSO)stat,1: found a BPDN solution,2: found a BP solution; exit based on small gradient,3: found a BP solution; exit based on small residual,4: found a LASSO solution,5: error: too many iterations,6: error: linesearch failed,7: error: found suboptimal BP solution,8: error: too many matrix-vector products

niters, number of iterationsnProdA, number of multiplications with AnProdAt, number of multiplications with A’n_newton, number of Newton stepstime_project, projection time (seconds)time_matprod, matrix-vector multiplications time (seconds)time_total, total solution time (seconds)niters_lsqr, number of lsqr iterations (ifsubspace_min=True)xnorm1, L1-norm model solution history through iterationsrnorm2, L2-norm residual history through iterationslambdaa, Lagrange multiplier history through iterations

References

- 1

E. van den Berg and M. P. Friedlander, “Probing the Pareto frontier for basis pursuit solutions”, SIAM J. on Scientific Computing, 31(2):890-912. (2008).

- 2

E. van den Berg and M. P. Friedlander, “Sparse optimization with least-squares constraints”, Tech. Rep. TR-2010-02, Dept of Computer Science, Univ of British Columbia (2010).